
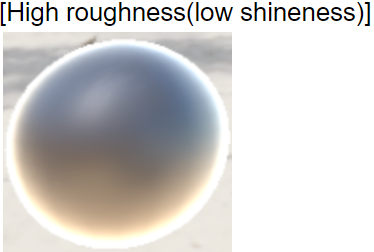

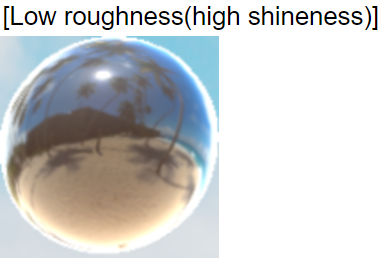

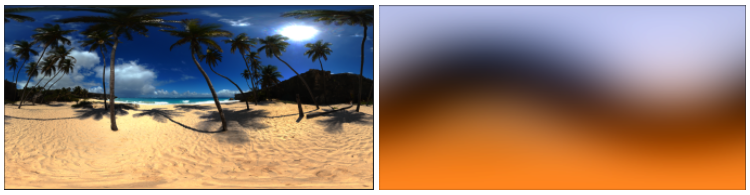

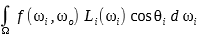

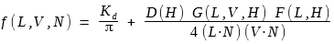

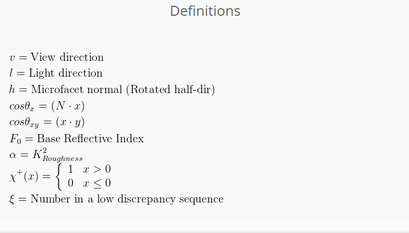

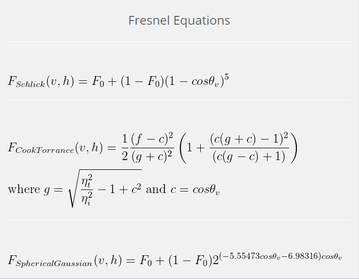

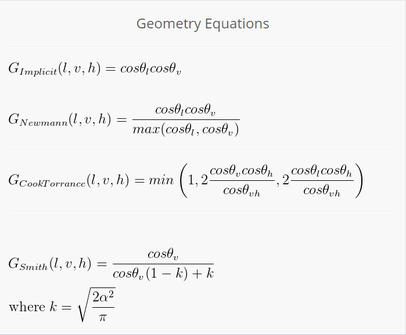

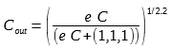

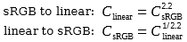

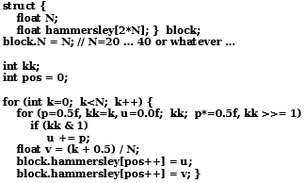

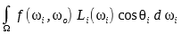

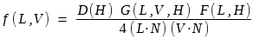

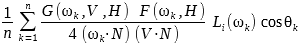

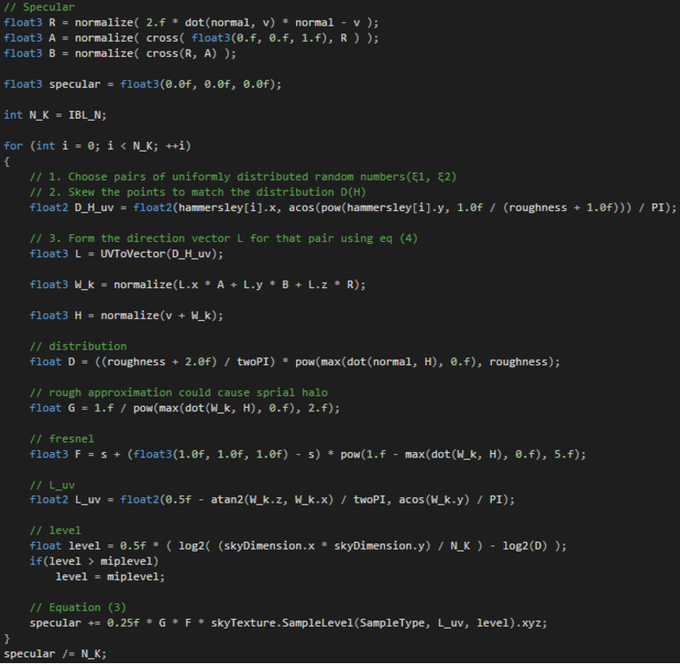

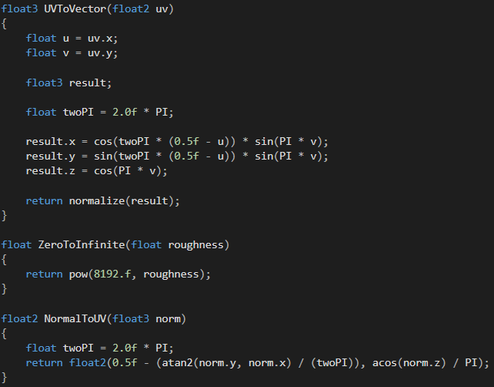

5/4/2017

Image Based Lighting

Image Based Lighting (IBL) is a 3D rendering technique that captures an omnidirectional representation of real-world lighting as an image, typically with a specialized camera. This image is projected onto a dome or sphere, much as in environment mapping, and then used to simulate the lighting for the objects in the scene. This lets highly detailed real-world lighting illuminate a scene, instead of trying to model the illumination accurately with an existing rendering technique. IBL often uses High Dynamic Range (HDR) imaging for greater realism. The technique is used in movies, games, and a wide range of other areas that need to render realistic scenes in real time.

My Implementation

Since IBL relies on physically based lighting calculations, there are two main terms you need to implement: the diffuse term and the specular term. In this project, I used two different texture maps. One is an irradiance map for the diffuse calculation (right image below), and the other is an HDR map for the specular calculation (left image below). You can use a pre-calculated irradiance map or generate one yourself.

Lighting
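The two lookups described above can be sketched as follows. The sketch is written in Python for readability rather than as shader code, and it rests on several assumptions. Both maps are stored as latitude-longitude (equirectangular) images with +y up. `sample_irradiance` and `sample_hdr` are hypothetical callbacks that return an RGB tuple for a (u, v) coordinate. The irradiance map already includes Lambert's 1/π factor. The specular term is a plain mirror lookup of the HDR map along the reflected view vector. A full physically based specular term would also weight by roughness and a BRDF, which this sketch omits.

```python
import math


# Assumption: both maps are latitude-longitude (equirectangular) images with +y up.
def direction_to_equirect_uv(d):
    """Map a unit direction (x, y, z) to (u, v) in [0, 1] on an equirectangular map.
    v = 0 is the top row (straight up), v = 1 the bottom row (straight down)."""
    x, y, z = d
    u = 0.5 + math.atan2(x, -z) / (2.0 * math.pi)
    v = math.acos(max(-1.0, min(1.0, y))) / math.pi
    return u, v


def dot(a, b):
    return sum(p * q for p, q in zip(a, b))


def reflect(incident, n):
    """Reflect an incident direction about the unit normal n: r = i - 2 (n . i) n."""
    k = 2.0 * dot(n, incident)
    return tuple(i - k * m for i, m in zip(incident, n))


def shade_ibl(normal, view_dir, sample_irradiance, sample_hdr, albedo, ks):
    """Diffuse term from the irradiance map (looked up along the normal) plus a
    mirror-like specular term from the HDR map (looked up along the reflected
    view ray). sample_irradiance and sample_hdr are hypothetical callbacks that
    take (u, v) and return an RGB tuple; ks is a scalar specular weight."""
    # Assumes the irradiance map already includes Lambert's 1/pi factor.
    diffuse = sample_irradiance(*direction_to_equirect_uv(normal))
    # view_dir points from the surface toward the eye, so reflect the eye ray (-view_dir).
    r = reflect(tuple(-c for c in view_dir), normal)
    specular = sample_hdr(*direction_to_equirect_uv(r))
    return tuple(a * d + s_w for a, d, s_w in
                 zip(albedo, diffuse, (ks * s for s in specular)))
```

Suppose both samplers are constant stand-ins: irradiance returns (0.5, 0.5, 0.5) and HDR returns (1, 1, 1). With albedo (1, 0, 0) and `ks = 0.04`, the result is roughly (0.54, 0.04, 0.04). In a real shader, the two callbacks would be texture fetches, and the specular lookup would usually read a prefiltered mip level chosen by roughness.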

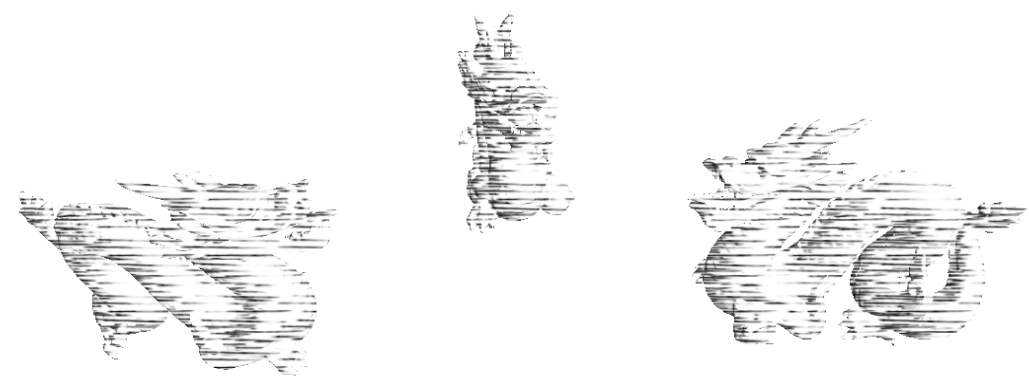

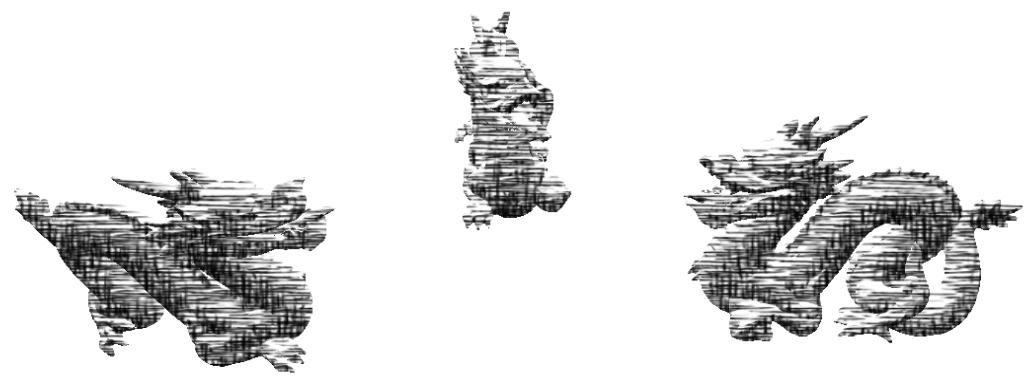

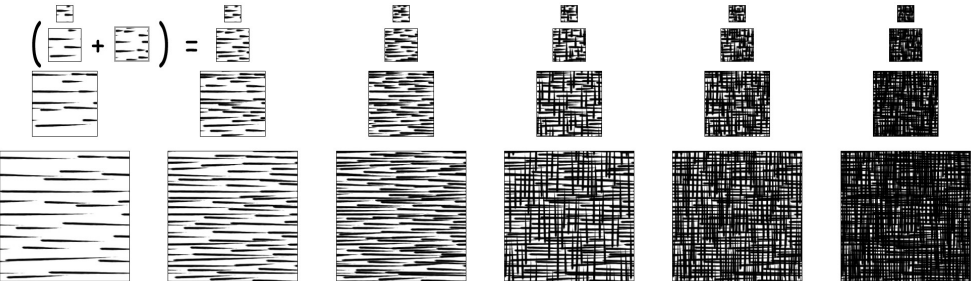

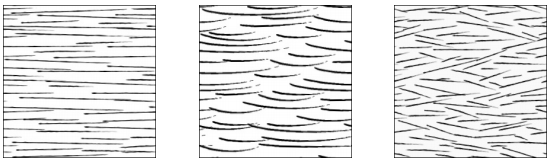

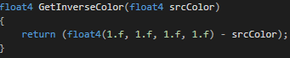

As you can see, my implementation is quite simple. I used the dot product of the light vector and the surface normal as the tonal value. Since I'm using 6 textures, I remapped the dot product from the range [-1, 1] to the range [0, 6]. This remapped value, 'Factor', is used to derive the blending weights of those textures. This way, I could determine how many strokes should be displayed on the surface based on the light position. Because this implementation relies heavily on the TAM (tonal art map) images, the result may differ from what you expect. In that case, you can try scaling the images to adjust the width of the strokes, or replace the images entirely with different ones, as shown below.
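The post doesn't say exactly how Factor becomes per-texture weights. The sketch below therefore assumes one plausible scheme: linear interpolation between the two textures nearest to Factor. Texture index 0 is assumed to be the darkest (densest strokes) and index 5 the lightest. Both vectors are assumed normalized, and `light_dir` is assumed to point from the surface toward the light. The function names are illustrative.

```python
import math

NUM_TONES = 6  # number of hatching textures, as in the post


def hatch_factor(normal, light_dir):
    """Remap N.L from [-1, 1] to [0, NUM_TONES] (the post's 'Factor')."""
    n_dot_l = sum(a * b for a, b in zip(normal, light_dir))
    n_dot_l = max(-1.0, min(1.0, n_dot_l))
    return (n_dot_l + 1.0) * 0.5 * NUM_TONES


def hatch_weights(factor):
    """Blend weights for the textures. Assumes index 0 is the darkest and the
    last index the lightest, and linearly interpolates between the two
    textures nearest to the factor. The weights always sum to 1."""
    # Only indices 0..NUM_TONES-1 exist, so clamp the factor to that range.
    t = max(0.0, min(float(NUM_TONES - 1), factor))
    lo = int(math.floor(t))
    hi = min(lo + 1, NUM_TONES - 1)
    frac = t - lo
    weights = [0.0] * NUM_TONES
    weights[lo] += 1.0 - frac
    weights[hi] += frac
    return weights
```

The final stroke color would then be the weighted sum of the six texture samples. Because of the clamp, Factor values between 5 and 6 all map to the lightest texture. The post doesn't state how it handles the top of its [0, 6] range.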

5/2/2017

Image Space Edge Detection

There are dozens of ways to detect and render edges in real time. They all fit roughly into four categories: hardware, image-space, object-space, and miscellaneous methods. Hardware methods are quick and simple to implement, but they come with severe limitations. Image-space methods are a bit more involved and provide medium-quality results. Object-space methods provide maximum quality and customizability, but often at the cost of speed. The miscellaneous category is a catch-all, but these methods tend to be quick and limited, like hardware methods.

In this post, I'm going to explain Sobel edge detection, one of the simplest ways to draw clean edges around objects. All you need is the depth buffer.

For edge detection, image processing techniques typically apply convolutions to some property of an image, or of a specialized image such as a depth buffer, to generate gradients. A gradient is a vector that points in the direction of the greatest local change, and its length gives the rate of that change. In image processing, convolutions take the form of small matrices called kernels or operators. Each cell of an operator stores a number that is multiplied by some numerical property of the corresponding pixel in the input image, and the products are summed. This operation is applied to every pixel in the input image, with optional special cases at the image borders, where input data may not be available. The resulting output image can be used in further calculations or displayed directly.

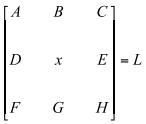
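The convolution just described can be sketched as follows. The image is a 2D list indexed `image[row][col]`, and out-of-range neighbors are clamped to the nearest border pixel, which is one common choice for the border special case. The function names are illustrative. Strictly speaking, this computes correlation because the kernel is not flipped, which is what image-processing shaders usually do. For kernels that are symmetric under a 180° rotation, the two operations are identical.

```python
def convolve_at(image, kernel, x, y):
    """Apply a square, odd-sized kernel centred on pixel (x, y).
    Neighbours outside the image are clamped to the nearest border pixel."""
    h, w = len(image), len(image[0])
    r = len(kernel) // 2
    total = 0.0
    for j in range(-r, r + 1):
        for i in range(-r, r + 1):
            yy = min(max(y + j, 0), h - 1)  # clamp row to the image
            xx = min(max(x + i, 0), w - 1)  # clamp column to the image
            total += kernel[j + r][i + r] * image[yy][xx]
    return total


def convolve(image, kernel):
    """Apply the kernel at every pixel, producing a new image."""
    return [[convolve_at(image, kernel, x, y) for x in range(len(image[0]))]
            for y in range(len(image))]
```

For example, an identity kernel (1 in the center, 0 elsewhere) returns the input unchanged. A 3x3 kernel of ones at a corner pixel counts the clamped border pixels several times.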

For each pixel (x), there are eight surrounding pixels (A, B, C, D, E, F, G, and H), except at the extreme borders of the viewport. The layout is shown below:

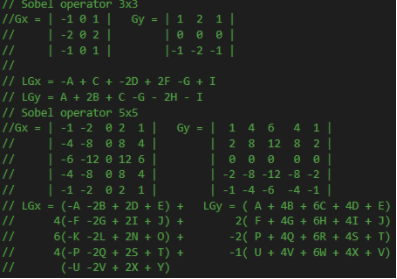

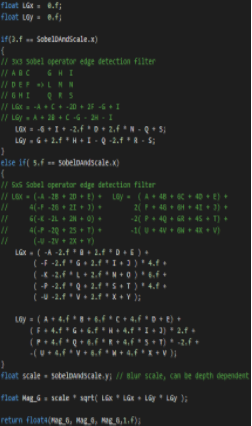

L represents the input pixels that will be combined with a kernel. Since a pixel is typically made up of color and alpha (transparency) information, you must decide which property of the input pixels to use. The operator can be applied to any such property, but in this example I used the depth buffer, as mentioned above, because depth gave the most reliable output. The Sobel operator can also come in various sizes: 3x3, 5x5, 7x7, and so on.

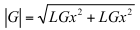
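As noted above, the operator is not limited to 3x3. One common way to build larger Sobel kernels is the outer product of a binomial smoothing vector and a derivative vector, each grown by repeatedly convolving with [1, 2, 1]. This is a generalization, not necessarily the one used in this post. The sketch assumes that Gx differentiates along columns and Gy along rows, with y increasing downward. `sobel_kernels` is an illustrative name.

```python
def conv1d(a, b):
    """Full 1D convolution of two coefficient lists."""
    out = [0] * (len(a) + len(b) - 1)
    for i, x in enumerate(a):
        for j, y in enumerate(b):
            out[i + j] += x * y
    return out


def sobel_kernels(size):
    """Return (Gx, Gy) Sobel kernels of an odd size >= 3 as nested lists."""
    if size < 3 or size % 2 == 0:
        raise ValueError("size must be an odd integer >= 3")
    smooth = [1, 2, 1]   # binomial smoothing
    deriv = [-1, 0, 1]   # central difference
    for _ in range((size - 3) // 2):
        smooth = conv1d(smooth, [1, 2, 1])
        deriv = conv1d(deriv, [1, 2, 1])
    gx = [[s * d for d in deriv] for s in smooth]  # rows smooth, columns differentiate
    gy = [[d * s for s in smooth] for d in deriv]  # rows differentiate, columns smooth
    return gx, gy
```

`sobel_kernels(3)` yields the familiar Gx = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]. The 5x5 version uses the smoothing vector [1, 4, 6, 4, 1] and the derivative vector [-1, -2, 0, 2, 1].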

By multiplying this operator component-wise with the target pixel and its surrounding pixels, and summing the products, you get the two components of the gradient, LGx and LGy, which together give its direction. To detect edges, however, you need the magnitude of the gradient.
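A minimal sketch of this step for a single pixel follows. It assumes L is a 3x3 list of depth values centered on the target pixel and uses the standard 3x3 Sobel kernels, which may differ in sign or orientation from the ones pictured above. `sobel_gradient` and `gradient_direction` are illustrative names.

```python
import math

SOBEL_X = [[-1, 0, 1],
           [-2, 0, 2],
           [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1],
           [0, 0, 0],
           [1, 2, 1]]


def sobel_gradient(L):
    """Return (LGx, LGy): each kernel multiplied component-wise with the
    3x3 neighbourhood L and summed."""
    lgx = sum(SOBEL_X[r][c] * L[r][c] for r in range(3) for c in range(3))
    lgy = sum(SOBEL_Y[r][c] * L[r][c] for r in range(3) for c in range(3))
    return lgx, lgy


def gradient_direction(lgx, lgy):
    """Angle of the gradient in radians, measured from the +x axis."""
    return math.atan2(lgy, lgx)
```

For a neighborhood whose depth increases from left to right, [[0, 1, 2], [0, 1, 2], [0, 1, 2]], this gives (8, 0), a gradient pointing along +x. A flat neighborhood gives (0, 0).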

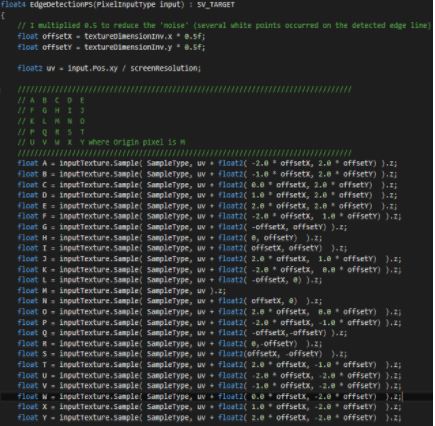
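Written out with the post's names, where Gx and Gy are the Sobel kernels and L is the input neighborhood, the standard definitions of the gradient components and magnitude are:

$$
LG_x = \sum_{i,j} (G_x)_{ij}\, L_{ij}, \qquad
LG_y = \sum_{i,j} (G_y)_{ij}\, L_{ij}, \qquad
\lVert \nabla L \rVert = \sqrt{LG_x^{2} + LG_y^{2}}
$$

Some real-time implementations replace the square root with the cheaper approximation $|LG_x| + |LG_y|$.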

The gradient magnitude can be used directly for edge detection. Edges are rendered by drawing black pixels wherever the gradient magnitude is greater than a user-defined value. The process of choosing which pixels to draw based on the gradient (or any other value) is called thresholding. I'll also post my implementation below; it is extremely easy to follow.
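This is not the author's implementation. It is a minimal CPU-side sketch of the same idea in Python. It runs the 3x3 Sobel operator over a depth buffer given as a 2D list and clamps lookups at the borders. A pixel is marked black (0) when the gradient magnitude exceeds `threshold` and white (1) otherwise. `sobel_edge_mask` and the 0/1 encoding are illustrative choices. A suitable threshold depends on the scene and on how depth is stored. A raw non-linear depth buffer compresses distant depth differences, so it may need linearizing first.

```python
import math

SOBEL_X = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]


def sobel_edge_mask(depth, threshold):
    """Return a 2D list with 0 (black, edge) where the Sobel gradient magnitude
    of the depth buffer exceeds threshold, and 1 (white) elsewhere.
    Border pixels reuse the nearest valid depth value."""
    h, w = len(depth), len(depth[0])
    mask = []
    for y in range(h):
        row = []
        for x in range(w):
            gx = gy = 0.0
            for j in range(3):
                for i in range(3):
                    yy = min(max(y + j - 1, 0), h - 1)
                    xx = min(max(x + i - 1, 0), w - 1)
                    d = depth[yy][xx]
                    gx += SOBEL_X[j][i] * d
                    gy += SOBEL_Y[j][i] * d
            magnitude = math.sqrt(gx * gx + gy * gy)
            row.append(0 if magnitude > threshold else 1)
        mask.append(row)
    return mask
```

For a depth buffer with a vertical step, where every row is [0, 0, 1, 1], a threshold of 0.5 marks the two columns on either side of the step as edges. Each row of the mask becomes [1, 0, 0, 1].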

Author

Hyung Jun Park